Dear Secretary Psaki and the Office of the Press Secretary, My name is Alexander B Howard; you may have noticed me tweeting at you these past couple of months during the transition and now the administration. I came to DC over …

I won’t bury the lede on this story: today is my first day at the Sunlight Foundation as a senior analyst. I’m enormously excited to be joining an organization that’s been at the heart of a global movement towards opening governments to the people they serve with technology, from open source to open data.

If you’ve followed my writing and interests over the past decade, you know that I’m passionate about open government in all of its forms. I’ve been humbled to meet thousands of people around the world who are deeply committed to public service and improving how government functions.

This is a natural fit. From improving public access to information to civic engagement to collaboration around code to participation in democratic governance processes, from regulations to legislation, the Sunlight Foundation has been at the cutting edge of making government more open, effective and accountable.

There’s also a personal reason I made this decision: Jake Brewer, a former Sunlighter and White House staffer whom we lost far too early last year, frequently urged me to make the most of my short time on Earth. This is the right place for me to be.

Long-time readers should expect me to continue writing and participating in this role, creating acts of advocacy journalism in the public interest.

I believe that people have a right to know what is being done in their name by their elected governments. Implicit in that view is the notion that representative democracy is the worst form of government, save for all the rest. It’s up to us to protect and improve the states that we have founded and fought to preserve.

As people who have been paying close attention to Sunlight know, it’s an organization in transition. I’m proud to join up with this open government “restartup”, pitching in wherever my talents are helpful. I believe 2016 is going to be a dynamic year at Sunlight, which is why I’ve thrown in my lot with the extraordinary folks on staff.

I hope that you will continue to send your thoughts, feedback, suggestions, tips and ideas my way in the days and months to come.

On September 23, 2014, the White House announced that the United States would create an official policy for open source software. Today, the nation took a big step towards making more software built for the people available to the people.

“We believe the policies released for public comment today will fuel innovation, lower costs, and better serve the public,” wrote U.S. chief information officer Tony Scott in a blog post at WhiteHouse.gov, announcing that the Obama administration had published a draft open source policy and would now take public comments on it online.

This policy will require new software developed specifically for or by the Federal Government to be made available for sharing and re-use across Federal agencies. It also includes a pilot program that will result in a portion of that new federally-funded custom code being released to the public.

Through this policy and pilot program, we can save taxpayer dollars by avoiding duplicative custom software purchases and promote innovation and collaboration across Federal agencies. We will also enable the brightest minds inside and outside of government to review and improve our code, and work together to ensure that the code is secure, reliable, and effective in furthering our national objectives. This policy is consistent with the Federal Government’s long-standing policy of technology neutrality through which we seek to ensure that Federal investments in IT are merit-based, improve the performance of our Government, and create value for the American people.

Scott highlighted several open source software projects that the federal government has deployed in recent years, including a tool to find nearby housing counselors, NotAlone.gov, the College Scorecard, data.gov, an online traffic dashboard, and the work of 18F, which publishes all of its work as free and open software by default.

The draft policy is more limited than it might be: as noted by Greg Otto at Fedscoop, federal agencies will be required to release 20 percent of newly developed code as open source.

As Jack Moore reports at NextGov, the policy won’t apply to software developed for national security systems, a development that might prove disappointing to members of the military open source community that has pioneered policy and deployment in this area.

The draft policy sensibly instructs federal agencies to prioritize releasing code that could have broader use outside of government.

The federal government is now soliciting feedback on the following considerations regarding its use of open source software.

Considerations Regarding Releasing Custom Code as Open Source Software

- To what extent is the proposed pilot an effective means to fuel innovation, lower costs, benefit the public, and meet the operational and mission needs of covered agencies?

- Would a different minimum percentage be more or less effective in achieving the goals above?

- Would an “open source by default” approach that required all new Federal custom code to be released as OSS, subject to exceptions for things like national security, be more or less effective in achieving the goals above?

- Is there an alternative approach that OMB should consider?

- What are the advantages and disadvantages associated with implementing this type of pilot program? To what extent could this policy have an effect on the software development market? For example, could such a policy increase or decrease competition among vendors, dollar amounts bid on Federal contracts, or total life-cycle cost to the Federal Government? How could it impact new products developed or transparency in quality of vendor-produced code?

- What metrics should be used to determine the impact and effectiveness of the pilot proposed in this draft policy, and of an open source policy more generally?

- What opportunities and challenges exist in Government-wide adoption of an open source policy?

- How broadly should an open source policy apply across the Government? Would a focus on particular agencies be more or less effective?

- This policy addresses custom code that is created by Federal Government employees as well as custom code that is Federally-procured. To what extent would it be appropriate and desirable for aspects of this draft policy to be applied in the context of Federal grants and cooperative agreements?

- How can the policy achieve its objectives for code that is developed with Government funds while at the same time enabling Federal agencies to select suitable software solutions on a case-by-case basis to meet the particular operational and mission needs of the agency? How should agencies consider factors such as performance, total life-cycle cost of ownership, security and privacy protections, interoperability, ability to share or reuse, resources required to later switch vendors, and availability of support?

If you have thoughts on any of these questions, you can email sourcecode@omb.eop.gov, participate in discussions on existing issues on Github, start a new one, or make a pull request to the draft policy on Github. You can see existing pull requests here and view all comments received here.

With this policy, the White House has fulfilled one of the commitments added to the second National Action Plan for open government in the fall of 2014. While there has been limited progress (or worse) on dozens of the other new and old commitments made in the three action plans published to date, this draft open source policy is a historic recognition of the principle that the source code for software developed by government agencies or contractors working for them can and should be released to other agencies and the general public for use or re-use.

Over at Govfresh, Luke Fretwell took note of the White House asking for feedback on the open government section of WhiteHouse.gov. Yesterday, Corinna Zarek, senior advisor for open government in the White House Office of Science and Technology Policy (OSTP), where the administration’s Open Government Initiative was originally spawned under former deputy chief technology officer Beth Noveck, published an email to the US Open Government Google Group:

We are working on a refresh of the Open Gov website, found at whitehouse.gov/open, and we’d like your help!

If you’re familiar with the history of the page, you can see we have begun updating it by shifting some of the existing content and adding new tabs and material.

What suggestions do you have for the site? What other efforts might we feature?

Please let us know – reply back to this thread, email us at opengov@ostp.gov, or tweet us at @OpenGov!

Here’s some background on the group and its purpose: The White House’s Open Government Working Group needs to solicit feedback from civil society in the United States on the various initiatives and commitments the administration has made. Such engagement is essential to gathering feedback from governance experts, advocates and the public on the development of new agency open government plans and to discussing progress on the national open government action plan.

As a result of a discussion at the working group this spring, OSTP created the US Open Government discussion group to connect White House staff and agency officials who work on open government to people outside of the federal government. According to the group’s description, the goal of this group is to “provide a safe and welcoming arena for government-focused collaboration and news-sharing around Open Government efforts of the United States government.” That “safe and welcoming” language is notable: the group is moderated by OpenTheGovernment.org with an eye on constructive, on-topic feedback, as opposed to, say, the much more open-ended freewheeling posts and threads on the (long-since closed) Open Government Dialog of 2009 or Change.gov.

After almost six months, the open government group, which can be accessed through a Web browser or using an email listserv, has 177 members and 37 posts. By almost any measure, these are extremely low levels of participation and engagement, although the quality of feedback from those members remains extremely high. By way of contrast, an open government and civic tech group on Facebook now has over 1900 members and an open government community on Google+ has over 1400 members, with both enjoying almost daily contributions. Low participation rates on this US Open Government Google Group are likely due in part to a lack of promotion by other White House staff, whether to the media or on the various social media platforms the White House has joined, which cumulatively have millions of followers, and, more broadly, to the historic lows of public trust in government, which have created icy headwinds for open government initiatives in recent years.

So far, Zarek’s solicitation has received two responses. One comes from Daniel Schuman, policy director for Citizens for Responsibility and Ethics in Washington (CREW), who made great suggestions, like adding a link to ethics.data.gov, a list of staff working on openness in the White House and their areas of responsibility, and links to 18F and the USDS.

“Finally, there are many great ideas about how to make government more open and transparent,” wrote Schuman. “Consider including a way for people to submit ideas where those submissions are also visible to the public (assuming they do not violate TOS). Consider how agencies or the government could respond to these suggestions. Perhaps a miniature version of “We the People,” but without the voting requiring a response.”

The other idea comes from open government consultant Lucas Cioffi, who suggested adding a link to a “community-powered open government phone hotline” like the experiment he recently created.

To those ideas, I’ll add eight quick suggestions in the spirit of open government:

1) Reinstate the open government dashboard that was removed and update it to the current state of affairs and compliance, with links to each. The Sunlight Foundation and CREW have already audited agency compliance with the Open Government Directive. By keeping an updated scorecard in a prominent place, the Obama administration could both increase transparency to members of the public wondering about what has been done and by whom, and put more pressure on agencies to be accountable for the commitments they have made.

2) Re-integrate individual case studies from the “Innovator’s Toolkit,” which was also removed, under participation and collaboration.

3) Create a Transparency tab and link to the “IC on the Record” tumblr and other public repositories for formerly secret laws, policies or documents that have been released.

4) Blog and tweet more about what’s happening in the open government world outside of the White House. Multiple open government advocates do daily digests and there’s a steady stream of news and ideas on the #opengov and #opendata hashtags on Twitter. Link to what’s happening and show the public that you’re reading and responding to feedback.

5) Link to the White House account and open government projects on Github, like Project Open Data, under both the new participation and collaboration tabs.

6) Highlight 18F’s effort to reboot the Freedom of Information Act.

7) Publish the second national action plan on open government as HTML on the site, and post and link to a version on Github where people can comment on it.

8) Create a FAQ under “participation” that lists replies to questions sent to @OpenGov.

If you have ideas for what should be on wh.gov/open, well, now you know who to tell, and where.

18F, the federal government’s new IT development shop, has launched a new look at the Freedom of Information Act (FOIA) in the form of an open source application hosted on Github. Today’s announcement is the most substantive evidence yet that the Obama administration will indeed modernize the Freedom of Information Act, as the United States committed to doing in its second National Action Plan on Open Government. Given how poor some of the “FOIA portals” and the underlying software that supports them are at all levels of government, this is tremendous news for anyone who cares about the use of technology to support open government.

Notably, 18F already has a prototype (pictured above) online that shows what a consolidated request submission hub could look like and plans to iterate upon it. This is a perfect example of “lean government,” or the application of lean startup principles and agile development to the creation of citizen-centric services in the public sector. Demonstrating its commitment to developing free and open source software in the open, 18F asked the public to follow the process online at their FOIA software repository on Github, send them feedback or even contribute to the project.

18F has now committed to creating software that improves how requests are made under the Freedom of Information Act. Specifically, it will develop tools that “improve the FOIA request submission experience,” “create a scalable infrastructure for making requests to federal agencies” and “make it easier for requesters to find records and other information that have already been made available online.”

According to 18F’s blog post, this work is supported and overseen by a “FOIA Task Force,” consisting of representatives from the Department of Justice, the Environmental Protection Agency, the Office of Management and Budget, and the Office of Science and Technology Policy. The task force will need to focus upon more than technology: while poor software has hindered requests and publishing, that’s not the primary issue that’s hindering the speed or quality of responses.

Despite the U.S. attorney general’s laudable commitment to a new era of open government in 2009, the Obama administration received a .91 GPA in FOIA compliance earlier this year from the Center for Effective Government.

While White House press secretary Josh Earnest may well be correct in stating that the federal government is processing more FOIA requests than ever, as the National Security Archive noted in March, the use of a FOIA exemption (protecting “deliberative processes”) to deny or heavily redact requests has skyrocketed in the past two years.

[NATIONAL SECURITY ARCHIVE: Chart created by Lauren Harper.]

As with the reduced access to government staff and scientists that a group of 38 journalism and open government advocates decried earlier this year, improving FOIA compliance cannot solely be addressed through technological means. To address endemic government secrecy and outright abuse of exemptions to protect against politically inconvenient disclosures, the Obama administration, and in particular the U.S. Justice Department, will need to expend political capital and push agencies to actually shift the cultural default towards openness and release uncomfortable or embarrassing data and documents and not redact them beyond understanding.

That’s admittedly a huge challenge, particularly for an administration facing multiple foreign and domestic conundrums, including a scandal over missing IRS emails and obfuscated records in an election year and the most politically polarized Congress and electorate in the nation’s history, but if President Barack Obama is truly committed to “creating an unprecedented level of openness in government,” it’s one that he and his administration will need to take on.

Last week, the White House took a victory lap for a novel event in U.S. history, when a bill that had its genesis as an online petition to the United States government filed at WhiteHouse.gov became law after the 113th Congress actually managed to pass a bill.

In a blog post explaining how cell phone unlocking became legal, Ezra Mechaber, deputy director of email and petitions in the White House Office of Digital Strategy, noted that this outcome “marked the very first time a We the People petition led to a legislative fix.” Mechaber also highlighted continued growth for the national e-petition platform: 15 million users, 22 million signatures and 350,000 petitions since it was launched in 2011.

Mechaber also mentioned two other things worth highlighting: “a simplified signing process that removes the need to create an account just to sign a petition” and a Write API that will “eventually allow people to sign petitions using new technologies, and on sites other than WhiteHouse.gov.” If and when that API goes live, I expect user growth and activity to spike again. Imagine, for instance, if people could sign petitions from within news stories or through Change.org. Enabling petition creators to have more of a relationship with signatories would also address one of the principal critiques levied against the site’s function. Professor Dave Karpf:

Launching the online petition at We The People created the conditions for a formal response from the White House. That was a plus. We The People provided no help in amplifying the petitions through email and social media. That was neutral in this case, since Reddit, EFF, Public Knowledge, and others were helping to amplify instead. But the site left the petition-creators with no residual list for follow-up actions. That’s a huge minus.

If the petition had been launched through a different site (like Change.org), then it would have been less likely to get a formal White House response, but more likely to facilitate the follow-up actions that Khanna/Howard, Wiens and Khanifar say are vital to eventual success.

The White House has not provided a timeline for when the beta API will become public. If they respond to my questions, I’ll update this post.

The White House officially launched a U.S. Digital Service today, promising to deliver “customer-focused government through smarter IT.” The new Digital Service will be “a small team made up of our country’s brightest digital talent that will work with agencies to remove barriers to exceptional service delivery,” according to a blog post by Beth Cobert, deputy director for management at the Office of Management and Budget (OMB), U.S. chief information officer Steve VanRoekel and U.S. chief technology officer Todd Park.

We are excited that Mikey Dickerson will serve as the Administrator of the U.S. Digital Service and Deputy Federal Chief Information Officer. Mikey was part of the team that helped fix HealthCare.gov last fall and will lead the Digital Service team on efforts to apply technology in smarter, more effective ways that improve the delivery of federal services, information, and benefits.

The Digital Service will work to find solutions to management challenges that can prevent progress in IT delivery. To do this, we will build a team of more than just a group of tech experts – Digital Service hires will have talent and expertise in a variety of disciplines, including procurement, human resources, and finance. The Digital Service team will take private and public-sector best practices and help scale them across agencies – always with a focus on the customer experience in mind. We will pilot the Digital Service with existing funds in 2014, and would scale in 2015 as outlined in the President’s FY 2015 Budget.

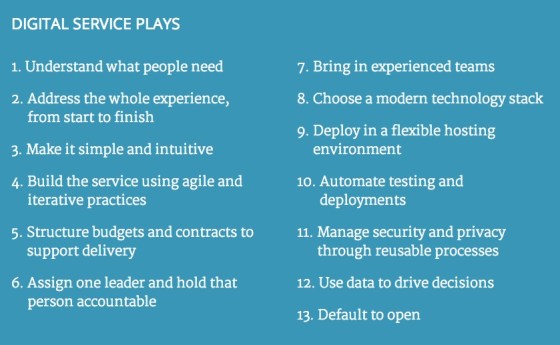

The USDS goes live with a Digital Services Playbook and “TechFAR,” a subsection of the guide that “highlights the flexibilities in the Federal Acquisition Regulation (FAR) that can help agencies implement ‘plays’ from the Digital Services Playbook.”

In what now appears to be de rigueur for information technology and digital government initiatives in the second term of the Obama administration, the playbook has been published on the White House account on Github, where the public is encouraged to give feedback and make suggestions upon the documents using GitHub Issues and to propose changes to the playbook by submitting a pull request. According to the Github account, pull requests that are made and accepted before September 1, 2014 “will be incorporated into the next release of the Digital Services Playbook and the TechFAR Handbook.”

While a team of 25 folks in OMB led by former Googler Mikey Dickerson and a playbook will not prevent the next healthcare.gov debacle, there’s a lot that’s good here.

As some guy wrote on November 18, 2013: “If Obama now, finally, fully realizes how much of an issue the broken state of government IT procurement is to federal agencies fulfilling their missions in the 21st century, he’ll use the soft power of the White House to convene the smartest minds from around the country and the hard power of an executive order to create the kernel of a United States Digital Services team built around the DNA of the CFPB: digital by default, open by nature.”

This isn’t quite that: the USDS looks more like a management consulting shop, versus the implementation and building done by the Presidential Innovation Fellows and folks at 18F, but maybe, all together, they’ll add up to much more than the sum of their parts.

At 18F, Uncle Sam is hoping to tap the success of the U.K.’s Government Digital Service. If the new digital government team housed within the U.S. General Services Administration gets it right, they’ll succeed in building 21st century citizen services by failing fast instead of failing big, as the Centers for Medicare and Medicaid Services memorably did last year with Healthcare.gov through poor planning and oversight and Social Security has this summer. One of the lessons learned from the Consumer Financial Protection Bureau’s successful use of technology is to align open source policy with mission. This week, 18F has done just that, publishing an open source policy on Github that makes open source the default in development:

The default position of 18F when developing new projects is to:

1. Use Free and Open Source Software (FOSS), which is software that does not charge users a purchase or licensing fee for modifying or redistributing the source code, in our projects and contribute back to the open source community.

2. Create an environment where any project can be developed in the open.

3. Publish publicly all source code created or modified by 18F, whether developed in-house by government staff or through contracts negotiated by 18F.

Eric Mill and Raphael Majma published a post on Tumblr that explained what FOSS is, described the policy and the approach of 18F’s open source team, and teased forthcoming guidelines for reuse:

FOSS is software that does not charge users a purchase or licensing fee for modifying or redistributing the source code. There are many benefits to using FOSS, including allowing for product customization and better interoperability between products. Citizen and consumer needs can change rapidly. FOSS allows us to modify software iteratively and to quickly change or experiment as needed.

Similarly, openly publishing our code creates cost-savings for the American people by producing a more secure, reusable product. Code that is available online for the public to inspect is open to a more rigorous review process that can assist in identifying flaws in the source code. Developing in the open, when appropriate, opens the project up to that review process earlier and allows for discussions to guide the direction of a products development. This creates a distinct advantage over proprietary software that undergoes a less diverse review and provides 18F with an opportunity to engage our stakeholders in ways that strengthen our work.

The use of open source software is not new in the Federal Government. Agencies have been using open source software for many years to great effect. What fewer agencies do is publish developed source code or develop in the open. When the Food and Drug Administration built out openFDA, an API that lets you query adverse drug events, they did so in the open. Because the source code was being published online to the public, a volunteer was able to review the code and find an issue. The volunteer not only identified the issue, but provided a solution to the team that was accepted as a part of the final product. Our policy hopes to recreate these kinds of public interactions and we look forward to other offices within the Federal Government joining us in working on FOSS projects.

In the next few days, we’re excited to publish a contributor’s guide about reuse and sharing of our code and some advice on working in the open from day one.

IMAGE CREDIT: mil-oss.org

The 2012-2013 influenza season has been a bad one, with flu reaching epidemic levels in the United States.

The post-industrial future of journalism is already here. It’s just not evenly distributed yet. The same trends changing journalism and society have the potential to create significant social change throughout the African continent, as states move from conditions of information scarcity to abundance.

That reality was clear on my recent trip to Africa, where I had the opportunity to interview Justin Arenstein at length during my visit to Zanzibar. Arenstein is building the capacity of African media to practice data-driven journalism, a task that has taken on new importance as digital disruption has permanently altered how we discover, read, share and participate in news.

One of the primary ways he’s been able to build that capacity is through the African News Innovation Challenge (ANIC), a variant of the Knight News Challenge in the United States.

The 2011 Knight News Challenge winners illustrated data’s ascendance in media and government, with platforms for data journalism and civic connections dominating the field.

As I wrote last September, the projects that the Knight Foundation has chosen to fund over the last two years are notable examples of working on stuff that matters: they represent collective investments in digital civic infrastructure.

The first winners of the African News Innovation Challenge, which concluded this winter, look set to extend that investment throughout the continent of Africa.

“Africa’s media face some serious challenges, and each of our winners tries to solve a real-world problem that journalists are grappling with. This includes the public’s growing concern about the manipulation and accuracy of online content, plus concerns around the security of communications and of whistleblowers or journalistic sources,” wrote Arenstein on the News Challenge blog.

While the twenty 2012 winners include investigative journalism tools and whistleblower security, there’s also a focus on citizen engagement, digitization and making public data actionable. To put it another way, the “news innovation” that’s being funded on both continents isn’t just gathering and disseminating information: it’s now generating data and putting it to work in the service of the needs of residents or the benefit of society.

“The other major theme evident in many of the 500 entries to ANIC is the realisation that the media needs better ways to engage with audiences,” wrote Arenstein. “Many of our winners try tackle this, with projects ranging from mobile apps to mobilise citizens against corruption, to improved infographics to better explain complex issues, to completely new platforms for beaming content into buses and taxis, or even using drone aircraft to get cameras to isolated communities.”

In the first half of our interview, published last year at Radar, Arenstein talked about Hacks/Hackers and expanding the capacity of data journalism. In the second half, below, we talk about his work at the African Media Initiative (AMI), the role of open source in civic media, and how an unconference model for convening people is relevant to innovation.

Justin Arenstein: The AMI has been going on for just over three years. It’s a fairly young organization, and I’ve been embedded now for about 18 months. The major deliverables and the major successes so far have been:

The idea is that we test ideas that are allowed to fail. We fund them in newsrooms and they’re driven by newsrooms. We match them up with technologists. We try and lower the barrier for companies to start experimenting and try and minimize risk as much as possible for them. We’ve launched a couple of slightly larger funds for helping to scale some of these ideas. We’ve just started work on a social venture or a VC fund as well.

Justin Arenstein: Africa hasn’t had the five-year kind of evolutionary growth that the Knight News Challenge has had in the U.S. What the News Challenge has done in the U.S. is effectively grown an ecosystem where newsrooms started to grapple with and accepted the reality that they have to innovate. They have to experiment. Digital is core to the way that they’re not only pushing news out but to the way that they produce it and the way that they process it.

We haven’t had any of that evolution yet in Africa. When you think about digital news in African media, they think you’re speaking about social media or a website. We’re almost right back at where the News Challenge started originally. At the moment, what we’re trying to do is raise sensitivity to the fact that there are far more efficient ways of gathering, ingesting, processing and then publishing digital content — and building tools that are specifically suited for the African environment.

There are bandwidth issues. There are issues around literacy, language use and also, in some cases, very different traditions of producing news. The output of what would be considered news in Africa might not be considered news product in some Western markets. We’re trying to develop products to deal with those gaps in the ecosystem.

Justin Arenstein: Some of the projects that we thought were particularly strong or apt amongst the African News Challenge finalists included more efficient or more integrated ways to manage workflow. If you look at many of the workflow software suites in the north, they’re, by African standards, completely unaffordable. As a result, there hasn’t been any systemic way that media down here produced news, which means that there’s virtually no way that they are storing and managing content for repackaging and for multi-platform publishing.

We’re looking at ways of not reinventing a CMS [content management system], but actually managing and streamlining workflow from ingesting reporting all the way to publishing.

Justin Arenstein: I think I may have misspoken by saying “content management systems.” I’m referring to managing, gathering and storing old news, the production and the writing of new content, a three- or four-phase editing process, and then publishing across multiple platforms. Ingesting creative design, layout, and making packages into podcasting or radio formats, and then publishing into things like Drupal or WordPress.

There have been attempts to take existing CMS systems like Drupal and turn them into broader, more ambitious workflow management tools. We haven’t seen very many successful ones. A lot of the kinds of media that we work with are effectively offline media, so these have been very lightweight applications.

The one thing that we have focused on is trying to “future-proof” it, to some extent, by building a lot of meta tagging and data management tools into these new products. That’s because we’re also trying to position a lot of the media partners we’re working with to be able to think about their businesses as data or content-driven businesses, as opposed to producing newspapers or manufacturing businesses. This seems to be working well in some early pilots we’ve been doing in Kenya.

Justin Arenstein: A big goal that we think we’ve achieved was to try and build a community of use. We put people together. We deliberately took them to an exotic location, far away from a town or location, where they’re effectively held hostage in a hotel. We built in as much free time as possible, with many opportunities to socialize, so that they start creating bonds. Right from the beginning, we did a “speed dating” kind of thing. There have been very few presentations; in fact, there was only one PowerPoint in five days. The rest of the time, it’s actually the participants teaching each other.

We brought in some additional technology experts or facilitators, but they were handpicked largely from previous challenges to share the experience of going through a similar process and to point people to existing resources that they might not be aware of. That seems to have worked very well.

On the sidelines of the Tech Camp, we’ve seen additional collaborations happen for which people are not asking for funding. It just makes logical sense. We’ve already seen some of the initial fruits of that: three of the applicants actually partnered and merged their applications. We’ve seen a workflow editorial CMS project partner up with an ad booking and production management system, to create a more holistic suite. They’re still building as two separate teams, but they’re now sharing standards and they’re building them as modular products that could be sold as a broader product suite.

Justin Arenstein: We’ve tried to tap into quite a few of them. Some of the more recent tools are transferable. I think there was a grand realization that people weren’t able to deliver on their promises, and where they did deliver on tools, there wasn’t documentation. The code was quite messy. They weren’t really robust. Often, applications were written for specific local markets or data requirements that didn’t transfer. You actually effectively had to rebuild them. We have been able to re-purpose DocumentCloud and some other tools.

I think we’ve learned from that process. What we’re trying to do with our News Challenge is to workshop finalists quite aggressively before they put in their final proposals.

Firstly, make sure that they’re being realistic, that they’re not unnecessarily building components, or wasting money and energy on building components for their project that are not unique, not revolutionary or innovative. They should try and almost “plug and play” with what already exists in the ecosystem, and then concentrate on building the new extensions, the real kind of innovations. We’re trying to improve on the Knight model.

Secondly, once the grantees actually get money, it comes in a tranche format so they agree to an implementation plan. They get cash, in fairly small grants by Knight standards. The maximum is $100,000. In addition, they get engineering or programming support from external developers that are on our payroll, working out of our labs. We’ve got a civic lab running out of Kenya and partners, such as Google.

Thirdly, they get business mentorship support from some leading commercial business consultants. These aren’t nonprofit types. These are people who are already advising some of the largest media companies in the world.

The idea is that, through that process, we’re hopefully going to arrive at a more realistic set of projects that have either sustainable revenue models and scaling plans, from the beginning, or built-in mechanisms for assessments, reporting back and learning, if they’re designed purely as experiments.

We’re not certain if it’s going to work. It’s an experiment. On the basis of the Tech Camp that we’ve gone through, it seems to have worked very well. We’ve seen people abandon what were, we thought, overly ambitious technology plans and instead match up or partner with existing technologists. They will still achieve their goals but do so in a more streamlined, agile manner by re-purposing existing tech.

Editor’s Note: This interview is part of an ongoing series at O’Reilly Radar on the people, tools and techniques driving data journalism.