April 4, 2014 meeting of PCAST at National Academy of Sciences

This week, the President’s Council of Advisors on Science and Technology (PCAST) met to discuss and vote to approve a new report on big data and privacy.

UPDATE: The White House published the findings of its review on big data today, including the PCAST review of technologies underpinning big data (PDF), discussed below.

As White House special advisor John Podesta noted in January, the PCAST has been conducting a study “to explore in-depth the technological dimensions of the intersection of big data and privacy.” Earlier this week, the Associated Press interviewed Podesta about the results of the review, reporting that the White House had learned of the potential for discrimination through the use of data aggregation and analysis. These are precisely the privacy concerns that stem from data collection that I wrote about earlier this spring. Here’s the PCAST’s list of “things happening today or very soon” that provide examples of technologies that can have benefits but pose privacy risks:

Pioneered more than a decade ago, devices mounted on utility poles are able to sense the radio stations being listened to by passing drivers, with the results sold to advertisers.26

In 2011, automatic license‐plate readers were in use by three quarters of local police departments surveyed. Within 5 years, 25% of departments expect to have them installed on all patrol cars, alerting police when a vehicle associated with an outstanding warrant is in view.27 Meanwhile, civilian uses of license‐plate readers are emerging, leveraging cloud platforms and promising multiple ways of using the information collected.28

Experts at the Massachusetts Institute of Technology and the Cambridge Police Department have used a machine‐learning algorithm to identify which burglaries likely were committed by the same offender, thus aiding police investigators.29

Differential pricing (offering different prices to different customers for essentially the same goods) has become familiar in domains such as airline tickets and college costs. Big data may increase the power and prevalence of this practice and may also decrease even further its transparency.30

The UK firm FeatureSpace offers machine‐learning algorithms to the gaming industry that may detect early signs of gambling addiction or other aberrant behavior among online players.31

Retailers like CVS and AutoZone analyze their customers’ shopping patterns to improve the layout of their stores and stock the products their customers want in a particular location.32 By tracking cell phones, RetailNext offers bricks‐and‐mortar retailers the chance to recognize returning customers, just as cookies allow them to be recognized by on‐line merchants.33 Similar WiFi tracking technology could detect how many people are in a closed room (and in some cases their identities).

The retailer Target inferred that a teenage customer was pregnant and, by mailing her coupons intended to be useful, unintentionally disclosed this fact to her father.34

The author of an anonymous book, magazine article, or web posting is frequently “outed” by informal crowd sourcing, fueled by the natural curiosity of many unrelated individuals.35

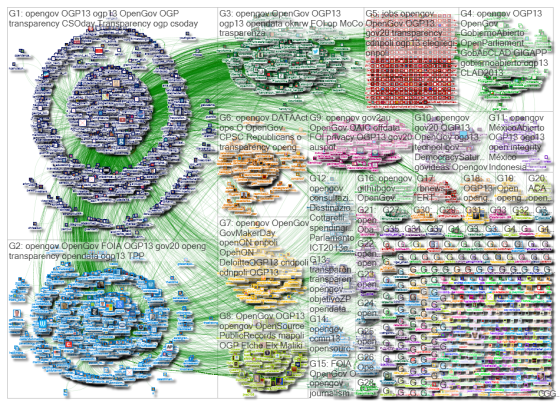

Social media and public sources of records make it easy for anyone to infer the network of friends and associates of most people who are active on the web, and many who are not.36

Marist College in Poughkeepsie, New York, uses predictive modeling to identify college students who are at risk of dropping out, allowing it to target additional support to those in need.37

The Durkheim Project, funded by the U.S. Department of Defense, analyzes social‐media behavior to detect early signs of suicidal thoughts among veterans.38

LendUp, a California‐based startup, sought to use nontraditional data sources such as social media to provide credit to underserved individuals. Because of the challenges in ensuring accuracy and fairness, however, they have been unable to proceed.

The PCAST meeting was open to the public through a teleconference line. I called in and took rough notes on the discussion of the forthcoming report as it progressed. My notes on the comments of professors Susan Graham and Bill Press offer sufficient insight into the forthcoming report that I thought publishing them today was warranted, given the ongoing national debate regarding data collection, analysis, privacy and surveillance. The following should not be considered verbatim or an official transcript. For that, look for the PCAST to make a recording and transcript available online in the future, at its archive of past meetings. The emphases below are mine, as are the words in [brackets].

Susan Graham: Our charge was to look at the confluence of big data and privacy, to summarize current tech and the way technology is moving in the foreseeable future, including its influence on the way we think about privacy.

The first thing that’s very very obvious is that personal data in electronic form is pervasive. Traditional data that was in health and financial [paper] records is now electronic and online. Users provide info about themselves in exchange for various services. They use Web browsers and share their interests. They provide information via social media, Facebook, LinkedIn, Twitter. There is [also] data collected that is invisible, from public cameras, microphones, and sensors.

What is unusual about this environment and big data is the ability to do analysis in huge corpuses of that data. We can learn things from the data that allow us to provide a lot of societal benefits. There is an enormous amount of patient data, data about disease, and data about genetics. By putting it together, we can learn about treatment. With enough data, we can look at rare diseases, and learn what has been effective. We could not have done this otherwise.

We can analyze more online information about education and learning, not only MOOCs but lots of learning environments. [Analysis] can tell teachers how to present material effectively, to do comparisons about whether one presentation of information works better than another, or analyze how well assessments work with learning styles.

Certain visual information is comprehensible, certain verbal information is hard to understand. Understanding different learning styles [can enable us to] develop customized teaching.

The reason this all works is the profound nature of analysis. This is the idea of data fusion, where you take multiple sources of information and combine them, which provides a much richer picture of some phenomenon. If you look at patterns of human movements on public transport, or pollution measures, or weather, maybe we can predict dynamics caused by human context.
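[An illustrative aside of mine, not part of the PCAST discussion: data fusion can be sketched as joining several sources on a shared key. Every name and number below is made up for illustration, including the `fuse` helper and the station data; real fusion systems also have to deal with mismatched keys, units, and time resolution.]

```python
from collections import defaultdict

# Hypothetical, made-up readings keyed by (station, hour). None of this is real data.
transit_riders = {("Central", 8): 1200, ("Central", 9): 900, ("Harbor", 8): 300}
pollution_pm25 = {("Central", 8): 41.0, ("Central", 9): 35.5, ("Harbor", 8): 12.0}
weather_temp_c = {("Central", 8): 18.0, ("Harbor", 8): 17.0}


def fuse(**sources):
    """Combine several keyed sources into one record per key.

    A key that is missing from a source simply lacks that field
    in the fused record.
    """
    fused = defaultdict(dict)
    for name, table in sources.items():
        for key, value in table.items():
            fused[key][name] = value
    return dict(fused)


combined = fuse(riders=transit_riders, pm25=pollution_pm25, temp_c=weather_temp_c)
for key in sorted(combined):
    print(key, combined[key])
```

[Each fused record says more than any single source does, which is both the benefit and the privacy risk described here.]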

We can do statistics-based pattern recognition on large amounts of data. One of the things that we understand about this statistics-based approach is that it might not be 100% accurate if you map down to the individual providing data in these patterns. We have to be very careful not to make mistakes about individuals because we make [an inference] about a population.
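[Another aside of mine, not from the discussion: a small worked example of why a pattern that holds well for a population can still be wrong about most of the individuals it flags. The prevalence, sensitivity, and specificity figures are hypothetical, chosen only to illustrate Bayes’ rule.]

```python
def positive_predictive_value(prevalence, sensitivity, specificity):
    """Probability that a flagged individual truly has the trait (Bayes' rule)."""
    true_positives = prevalence * sensitivity
    false_positives = (1.0 - prevalence) * (1.0 - specificity)
    return true_positives / (true_positives + false_positives)


# Hypothetical numbers: 1% of the population has the trait, the pattern
# catches 90% of those people, and it correctly clears 95% of everyone else.
ppv = positive_predictive_value(prevalence=0.01, sensitivity=0.90, specificity=0.95)
print(f"Chance that a flagged person truly has the trait: {ppv:.1%}")
```

[Under these assumed numbers, only about 15% of flagged individuals actually have the trait, even though the pattern looks accurate in aggregate.]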

How do we think about privacy? We looked at it from the point of view of harms. There are a variety of ways in which results of big data can create harm, including inappropriate disclosures [of personal information], potential discrimination against groups, classes, or individuals, and embarrassment to individuals or groups.

We turned to what tech has to offer in helping to reduce harms. We looked at a number of technologies in use now. We looked at a bunch coming down the pike. We looked at several technologies in use, some of which become less effective because of the pervasiveness [of data] and depth of analytics.

We traditionally have controlled [data] collection. We have seen some data collection from cameras and sensors that people don’t know about. If you don’t know, it’s hard to control.

Tech creates many concerns. We have looked at methods coming down the pike. Some are more robust and responsive. We have a number of draft recommendations that we are still working out.

Part of privacy is protecting the data using security methods. That needs to continue. It needs to be used routinely. Security is not the same as privacy, though security helps to protect privacy. There are a number of approaches that are now used by hand that, with sufficient research, could be automated and used more reliably, so they scale.

There needs to be more research and education about privacy. Professionals need to understand how to treat privacy concerns anytime they deal with personal data. We need to create a large group of professionals who understand privacy, and privacy concerns, in tech.

Technology alone cannot reduce privacy risks. There has to be a policy as well. It was not our role to say what that policy should be. We need to lead by example by using good privacy protecting practices in what the government does and increasingly what the private sector does.

Bill Press: We tried throughout to think of scenarios and examples. There’s a whole chapter [in the report] devoted explicitly to that.

They range from things being done today with present technology, even though they are not all known to people, to our extrapolations to the outer limits of what might well happen in the next ten years. We tried to balance the examples by showing both that the benefits are great and that they raise challenges, including the possibility of new privacy issues.

In another aspect, in Chapter 3, we tried to survey technologies from both sides: those that are going to bring benefits, those that will protect [people], and also those that will raise concerns.

In our technology survey, we were very much helped by the team at the National Science Foundation. They provided a very clear, detailed outline of where they thought that technology was going.

This was part of our outreach to a large number of experts and members of the public. That doesn’t mean that they agree with our conclusions.

Eric Lander: Can you take everybody through analysis of encryption? Are people using much more? What are the limits?

Graham: The idea behind classical encryption is that when data is stored, when it’s sitting around in a database, let’s say, encryption entangles the representation of the data so that it can’t be read without using a mathematical algorithm and a key to convert a seemingly meaningless set of bits into something reasonable.

The same technology, where you convert data into meaningless bits and back, is used when you send data from one place to another. So, if someone is scanning traffic on the internet, they can’t read it. Over the years, we’ve developed pretty robust ways of doing encryption.
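[An aside of mine, not from the discussion: a toy sketch of the idea that an algorithm plus a key turns readable data into seemingly meaningless bits and back. It uses a one-time-pad style XOR, which I chose for brevity; it is not what the PCAST described and not what production systems use. Real systems rely on vetted ciphers and libraries. The `xor_bytes` helper and the sample message are hypothetical.]

```python
import secrets


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """XOR each data byte with the matching key byte.

    Applying the same key twice returns the original data.
    """
    if len(key) != len(data):
        raise ValueError("a one-time pad key must be exactly as long as the data")
    return bytes(d ^ k for d, k in zip(data, key))


message = "patient 1234: diagnosis on file".encode("utf-8")  # made-up record
key = secrets.token_bytes(len(message))  # random key, used only once

ciphertext = xor_bytes(message, key)     # unreadable without the key
recovered = xor_bytes(ciphertext, key)   # same algorithm + key restores the data

print(ciphertext.hex())
print(recovered.decode("utf-8"))
```

[Note that anyone who obtains `key` can read the data, and that the data has to be decrypted before it can be used.]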

The weak link is that to use data, you have to read it, and it becomes unencrypted. Security technologists worry about it being read in that short time.

Encryption technology is vulnerable. The key that unlocks the data is itself vulnerable to theft or getting the wrong user to decrypt.

Both problems of encryption are active topics of research, including how to use data without being able to read it. There is research on increasing the robustness of encryption, so if a key is disclosed, you haven’t lost everything and you can protect some of the data or future encryption of new data. This reduces risk a great deal and is important to use. Encryption alone doesn’t protect.

Unknown Speaker: People read of breaches derived from security. I see a different set of issues of privacy from big data vs those in security. Can you distinguish them?

Bill Press: Privacy and security are different issues. Security is necessary to have good privacy in the technological sense: if communications are insecure, they clearly can’t be private. Privacy goes beyond that, to parties that are authorized, in a security sense, to see the information. Privacy is much closer to values. Security is much closer to protocols.

The interesting thing is that this is less about purely tech elements; everyone can agree on the right protocol, eventually. These are things that go beyond that and have to do with values.